
In linear regression, the relationships are modeled using linear predictor functions whose unknown model parameters are estimated from the data. Most commonly, the conditional mean of the response given the values of the explanatory variables (or predictors) is assumed to be an affine function of those values; less commonly, the conditional median or some other quantile is used. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of the response given the values of the predictors, rather than on the joint probability distribution of all of these variables, which is the domain of multivariate analysis.

Linear regression was the first type of regression analysis to be studied rigorously and to be used extensively in practical applications. This is because models that depend linearly on their unknown parameters are easier to fit than models that are non-linearly related to their parameters, and because the statistical properties of the resulting estimators are easier to determine.

Linear regression has many practical uses, and most applications fall into one of two broad categories. One of them is prediction or forecasting: if the goal is to reduce error (that is, variance) in prediction, linear regression can be used to fit a predictive model to an observed data set of values of the response and explanatory variables. After developing such a model, if additional values of the explanatory variables are collected without an accompanying response value, the fitted model can be used to make a prediction of the response.

Multiple linear regression is distinct from multivariate linear regression, where multiple correlated dependent variables are predicted rather than a single scalar variable. If the explanatory variables are measured with error, then errors-in-variables models, also known as measurement error models, are required.

In statistics, linear regression is a statistical model that estimates the linear relationship between a scalar response and one or more explanatory variables (also known as the dependent and independent variables, respectively). The case of one explanatory variable is called simple linear regression; for more than one, the process is called multiple linear regression.

In a simple linear regression, there is only one independent variable (x). However, we may want to include more than one independent variable to improve the predictive power of our regression. This is known as multiple regression, which can be solved using our Multiple Regression Calculator.

One of the most important parts of regression is testing for significance. The two tests for significance, the t test and the F test, are examples of hypothesis tests. Hypothesis testing can be done using our Hypothesis Testing Calculator.

The difference between a confidence interval and a prediction interval is that a confidence interval gives a range for the expected value of y, while a prediction interval gives a range for an individual predicted value of y. At the same value of x and the same confidence level, confidence intervals will be narrower than prediction intervals.
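
The contrast between the two kinds of interval can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data set is made up, the function name `interval_half_widths` is hypothetical, and the critical value 3.182 is the 95% two-sided t value for 3 degrees of freedom read from a standard t table rather than computed. The sketch uses the usual textbook standard errors for the mean response and for an individual response.

```python
import math

# Hypothetical sample data, chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]

# Assumed critical value t(0.025, n - 2 = 3) for a 95% interval,
# taken from a standard t table rather than computed here.
T_CRIT = 3.182


def interval_half_widths(x, y, x_new, t_crit):
    """Return (confidence, prediction) interval half-widths at x_new."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b1 = sxy / sxx              # least squares slope
    b0 = y_bar - b1 * x_bar     # least squares intercept
    sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    s = math.sqrt(sse / (n - 2))  # standard error of the estimate
    # This term grows as x_new moves away from the mean of x.
    leverage = 1.0 / n + (x_new - x_bar) ** 2 / sxx
    ci = t_crit * s * math.sqrt(leverage)        # for the expected value of y
    pi = t_crit * s * math.sqrt(1.0 + leverage)  # for an individual value of y
    return ci, pi


for x_new in (1.0, 3.0, 5.0):
    ci, pi = interval_half_widths(x, y, x_new, T_CRIT)
    print(f"x = {x_new}: CI half-width {ci:.3f}, PI half-width {pi:.3f}")
```

Each interval is the fitted value ŷ plus or minus the corresponding half-width. The extra `1.0 +` inside the prediction interval's square root is what makes it wider than the confidence interval at every x.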

Confidence intervals and prediction intervals can be constructed around the estimated regression line. In both cases, the intervals will be narrowest near the mean of x and get wider the further they move from the mean.

Given the values of any two of the three sums of squares (total, regression and error), the third one can be easily found.

For the t test, the test statistic is used to conduct the hypothesis test, using a t distribution with n - 2 degrees of freedom. In simple linear regression, the F test amounts to the same hypothesis test as the t test. The only difference will be the test statistic and the probability distribution used.
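
The equivalence of the two tests in simple linear regression can be checked numerically. The sketch below assumes the usual null hypothesis that the slope is zero, uses made-up data, and computes only the test statistics. Turning them into p-values would need a t or F table, or a statistics library, which is left out here. All variable names are illustrative.

```python
import math

# Hypothetical sample data, chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]

n = len(x)
x_bar = sum(x) / n
y_bar = sum(y) / n
sxx = sum((xi - x_bar) ** 2 for xi in x)
sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

# Least squares slope and intercept.
b1 = sxy / sxx
b0 = y_bar - b1 * x_bar
y_hat = [b0 + b1 * xi for xi in x]

# Sums of squares for error and regression.
sse = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))
ssr = sum((yh - y_bar) ** 2 for yh in y_hat)

mse = sse / (n - 2)  # mean square error, n - 2 degrees of freedom
msr = ssr / 1        # mean square regression, 1 degree of freedom

# t statistic for the slope (assumed null hypothesis: slope = 0).
se_b1 = math.sqrt(mse / sxx)
t_stat = b1 / se_b1

# F statistic for the overall regression.
f_stat = msr / mse

print(f"t = {t_stat:.4f}, t squared = {t_stat ** 2:.4f}, F = {f_stat:.4f}")
```

With a single independent variable, the F statistic equals the square of the t statistic, which is why both tests lead to the same conclusion. The t statistic is compared against a t distribution with n - 2 degrees of freedom, and the F statistic against an F distribution with 1 and n - 2 degrees of freedom.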

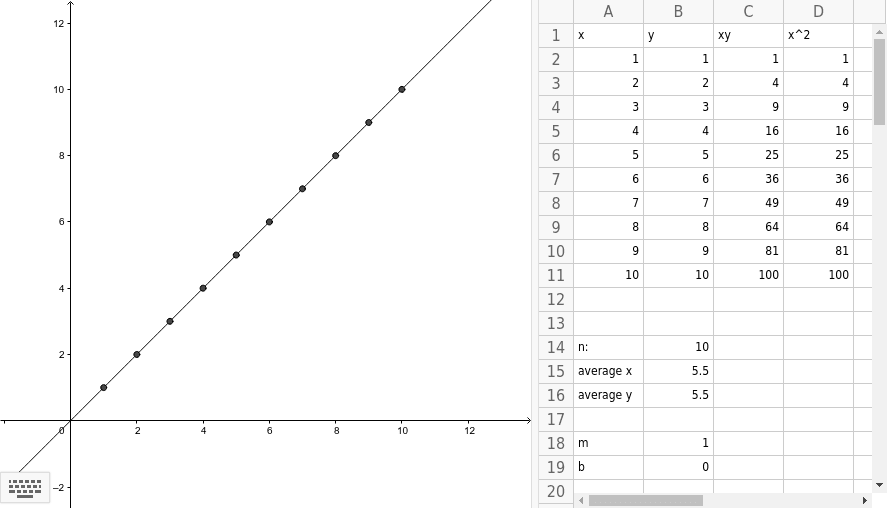

In simple linear regression, the starting point is the estimated regression equation: ŷ = b₀ + b₁x. It provides a mathematical relationship between the dependent variable (y) and the independent variable (x). Furthermore, it can be used to predict the value of y for a given value of x. There are two things we need to get the estimated regression equation: the slope (b₁) and the intercept (b₀). The formulas for the slope and intercept are derived from the least squares method: min Σ(y - ŷ)². The graph of the estimated regression equation is known as the estimated regression line.

After the estimated regression equation, the second most important aspect of simple linear regression is the coefficient of determination. The coefficient of determination, denoted r², provides a measure of goodness of fit for the estimated regression equation. Before we can find r², we must find the values of the three sums of squares: Sum of Squares Total (SST), Sum of Squares Regression (SSR) and Sum of Squares Error (SSE). The relationship between them is given by SST = SSR + SSE.
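
As a rough illustration of these steps, the following Python sketch fits the estimated regression equation with the standard closed-form least squares formulas, then computes the three sums of squares and the coefficient of determination. The data values and the function names `fit_simple_regression` and `sums_of_squares` are assumptions made for the example, not part of any particular calculator.

```python
# Hypothetical sample data, chosen only for illustration.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]


def fit_simple_regression(x, y):
    """Return (b0, b1) that minimise the sum of (y - y_hat) squared."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b1 = sxy / sxx            # slope
    b0 = y_bar - b1 * x_bar   # intercept
    return b0, b1


def sums_of_squares(x, y, b0, b1):
    """Return (SST, SSR, SSE) for the fitted line y_hat = b0 + b1 * x."""
    y_bar = sum(y) / len(y)
    y_hat = [b0 + b1 * xi for xi in x]
    sst = sum((yi - y_bar) ** 2 for yi in y)
    ssr = sum((yh - y_bar) ** 2 for yh in y_hat)
    sse = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))
    return sst, ssr, sse


b0, b1 = fit_simple_regression(x, y)
sst, ssr, sse = sums_of_squares(x, y, b0, b1)
r_squared = ssr / sst  # coefficient of determination

print(f"y_hat = {b0:.2f} + {b1:.2f} x")
print(f"SST = {sst:.2f}, SSR = {ssr:.2f}, SSE = {sse:.2f}")
print(f"r squared = {r_squared:.2f}")

# Predict y for a new value of x using the estimated regression equation.
x_new = 6.0
print(f"prediction at x = {x_new}: {b0 + b1 * x_new:.2f}")
```

For this small data set the fitted line is ŷ = 2.2 + 0.6x, with SST = 6, SSR = 3.6 and SSE = 2.4, so r² = 0.6 and the identity SST = SSR + SSE holds.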
